AI Fundamentals Oxford: A Complete Guide to Core Concepts, Learning Paths, and Real-World Applications

The AI fundamentals Oxford teaches across its programmes represent some of the most rigorous and well-structured introductions to the field available anywhere in the world. This guide breaks down those core concepts, maps a clear learning roadmap, and shows how AI fundamentals translate into real-world systems like ChatGPT and Tesla’s Autopilot. Artificial intelligence is no longer a futuristic abstraction; it is the defining technology of our era, and understanding its foundational principles has become essential for anyone working in technology, research, or business.

What Are the AI Fundamentals You Need to Know?

At their core, AI fundamentals refer to the foundational concepts, mathematical frameworks, and algorithmic paradigms that underpin every modern artificial intelligence system. Whether you are studying at Oxford, working through an online programme, or teaching yourself through open-source resources, the same pillars apply: machine learning, deep learning, natural language processing, computer vision, and robotics. These are not isolated disciplines; they overlap, reinforce one another, and together form the intellectual infrastructure of the entire AI field.

The AI fundamentals Oxford emphasises in its Department of Computer Science curriculum are grounded in both theoretical rigour and practical application. As Professor Michael Wooldridge, former head of Oxford’s Department of Computer Science and author of A Brief History of Artificial Intelligence, has noted, “You cannot meaningfully contribute to AI, whether in research or industry, without a deep understanding of the mathematical and computational principles that make it work.” This philosophy shapes how Oxford structures its AI education, and it is the framework we follow throughout this guide.

The Core Pillars of AI Fundamentals

Machine Learning: The Engine Behind Modern AI

Machine learning is the subfield of AI that enables systems to learn from data without being explicitly programmed for every task. It is, by virtually every measure, the most commercially important branch of AI today. According to Stanford’s 2025 AI Index Report, global corporate investment in machine learning infrastructure exceeded $100 billion in 2024, with adoption accelerating across healthcare, finance, and manufacturing.

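Machine learning divides into three primary paradigms: supervised learning (learning from labelled examples), unsupervised learning (finding structure in unlabelled data), and reinforcement learning (learning through trial, error, and reward).

Understanding these three categories is the first true milestone in mastering AI fundamentals. Oxford’s introductory ML modules cover all three, with a particular emphasis on the mathematical underpinnings that make them work: probability theory, linear algebra, and optimisation.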
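To make the supervised case concrete, here is a minimal sketch in Python (the language this guide recommends later) of learning from labelled examples by optimisation: it fits a straight line to toy data with batch gradient descent on mean squared error. The data, learning rate, and epoch count are illustrative assumptions, not material from any Oxford module.

```python
# Minimal sketch: supervised learning framed as an optimisation problem.
# Fit y = w*x + b to labelled examples by gradient descent on mean squared error.
# The toy data is hypothetical, generated from the rule y = 2x + 1.

def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Return (w, b) that minimise mean squared error via batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step downhill, scaled by the learning rate
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]  # labels produced by the "true" rule
w, b = fit_line(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}")  # → w = 2.000, b = 1.000
```

The model never sees the rule y = 2x + 1 directly; it recovers it purely from the labelled pairs, which is what distinguishes learning from explicit programming.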

Deep Learning and Neural Networks

Deep learning is a subset of machine learning that uses artificial neural networks with multiple layers (hence “deep”) to model complex patterns in large datasets. The breakthrough insight, that stacking layers of simple computational units can approximate extraordinarily complex functions, has driven nearly every major AI advance of the past decade.
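As a small illustration of why stacking helps, the sketch below computes XOR, a function no single linear unit can represent, using two layers of simple units. The hand-set weights follow the ReLU construction from the XOR example in Goodfellow, Bengio and Courville’s Deep Learning; in a real network they would be learned from data. The helper names (`dense`, `xor_net`) are hypothetical.

```python
def relu(values):
    """Rectified linear unit, applied element-wise."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """Fully connected layer: each output is a weighted sum of the inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def xor_net(x1, x2):
    """Two stacked layers with hand-set (not learned) weights."""
    # Hidden layer: h1 = relu(x1 + x2), h2 = relu(x1 + x2 - 1)
    hidden = relu(dense([x1, x2], weights=[[1, 1], [1, 1]], biases=[0, -1]))
    # Output layer: h1 - 2 * h2, which equals 1 exactly when one input is 1
    (out,) = dense(hidden, weights=[[1, -2]], biases=[0])
    return out


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_net(a, b))
```

Remove the hidden layer and no choice of weights reproduces this truth table, which is the simplest case of the depth argument above.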

The architecture that changed everything is the transformer, introduced in the landmark paper “Attention Is All You Need” by Vaswani et al. (2017), published on arXiv. The transformer replaced recurrence with a self-attention mechanism, enabling models to process entire sequences in parallel and capture long-range dependencies far more effectively than previous architectures like LSTMs.
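The core of that mechanism is scaled dot-product attention, written in the paper as softmax(QK^T / sqrt(d_k)) V. The following is a minimal single-head sketch in plain Python, assuming toy 2-dimensional token embeddings and identity projection matrices; real transformers learn the projections and add multiple heads, positional information, and feed-forward layers.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention: softmax(Q K^T / sqrt(d_k)) V,
    with Q, K and V all projected from the same sequence x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    outputs = []
    for q_i in q:
        # Each query is scored against every key directly: distant positions
        # interact in one step, and no position waits on a previous one
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in k]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors
        outputs.append([sum(w * v_j[c] for w, v_j in zip(weights, v))
                        for c in range(len(v[0]))])
    return outputs


identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy 2-d token embeddings
result = self_attention(tokens, identity, identity, identity)
for row in result:
    print([round(val, 3) for val in row])
```

The loop over queries is written sequentially for readability, but because no iteration depends on another, every row can be computed at once as a matrix product, which is the parallelism advantage over LSTMs.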

Real-World Example: How Tesla Uses Deep Learning for Computer Vision

Tesla’s Autopilot system is one of the most visible real-world applications of deep learning fundamentals. Tesla uses convolutional neural networks (CNNs) and, more recently, transformer-based architectures to process video feeds from eight cameras simultaneously. The system performs object detection, lane recognition, depth estimation, and trajectory prediction, all in real time. Notably, Tesla relies entirely on vision-based AI: it has dropped radar from its vehicles and has never used LiDAR in its production cars.

This approach demonstrates supervised learning (trained on millions of labelled driving clips) combined with reinforcement learning (optimising driving behaviour through simulation). It is a powerful case study in how the fundamentals Oxford teaches (linear algebra, optimisation, and neural network architecture) translate directly into production systems.
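The basic operation inside the CNNs mentioned above is the 2-D convolution: a small kernel slides across the image, computing a weighted sum at every position. The sketch below is a generic illustration rather than anything from Tesla’s stack; the toy image and the hand-set vertical-edge kernel are assumptions, whereas a trained CNN learns its kernels from labelled data. Like most deep learning libraries, it does not flip the kernel (strictly, this is cross-correlation).

```python
def conv2d(image, kernel):
    """'Valid' 2-D convolution as used in CNNs: stride 1, no padding,
    kernel not flipped (cross-correlation)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            # Weighted sum of the image patch under the kernel at (r, c)
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 4x4 greyscale image: dark left half, bright right half (a vertical edge)
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-set vertical-edge detector (hypothetical; a CNN would learn its kernels)
kernel = [
    [-1, 1],
    [-1, 1],
]
print(conv2d(image, kernel))  # → [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The response is non-zero only in the column where dark meets bright; stacking many such learned filters is how a CNN builds up from edges to lanes, vehicles, and pedestrians.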

Natural Language Processing (NLP)

Natural language processing is the branch of AI concerned with enabling machines to understand, generate, and interact using human language. NLP has undergone a revolution since the introduction of transformer-based models, moving from rule-based systems and statistical methods to end-to-end neural approaches.

The key concepts within NLP fundamentals include tokenisation, embeddings, attention, and text generation.

Real-World Example: How ChatGPT Uses Transformers

ChatGPT, built by OpenAI, is perhaps the most widely recognised application of NLP fundamentals. As detailed in OpenAI’s GPT-4 technical report, the system is a large-scale transformer model trained in two stages. First, it undergoes pre-training on vast corpora of text, learning to predict the next token in a sequence. Then it is fine-tuned using Reinforcement Learning from Human Feedback (RLHF), where human evaluators rank outputs and the model adjusts its behaviour accordingly.
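The ranking step is commonly implemented by training a reward model with a pairwise loss, as described in OpenAI’s InstructGPT paper (Ouyang et al., 2022). The GPT-4 report does not publish its training details, so treat the following as a sketch of the general technique, not of ChatGPT’s implementation: given scalar rewards for a human-preferred and a rejected response, the loss is small when the preferred one scores higher. The later policy-optimisation stage (PPO in InstructGPT) is not shown.

```python
import math


def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Bradley-Terry style reward-model loss: -log(sigmoid(r_chosen - r_rejected)).
    Low when the human-preferred response gets the higher reward."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals softplus(-m); this form avoids overflow for large |m|
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Reward model agrees with the human ranking: small loss
print(pairwise_preference_loss(2.0, 0.5))
# Reward model disagrees with the human ranking: large loss
print(pairwise_preference_loss(0.5, 2.0))
```

Minimising this loss over many ranked pairs teaches the reward model to score responses the way evaluators would; that score then guides fine-tuning of the language model itself.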

When you type a prompt into ChatGPT, the model tokenises your input, processes it through dozens of transformer layers using self-attention, and generates a response one token at a time. Understanding this pipeline (tokenisation, embedding, attention, and generation) is a core component of the AI fundamentals Oxford covers in its NLP modules.
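The generate-one-token-at-a-time loop can be sketched in a few lines. To keep it self-contained, the transformer is replaced by a hypothetical stand-in (a bigram table that predicts the next token from the previous token only), and the tokeniser simply splits on whitespace; neither reflects how ChatGPT works internally. Decoding is greedy: it always picks the most likely next token.

```python
from collections import Counter, defaultdict


def tokenise(text):
    # Toy word-level tokeniser; production systems use subword schemes such as BPE
    return text.lower().split()


def train_bigram_model(corpus):
    """Count which token follows which: a stand-in for a learned next-token distribution."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = tokenise(sentence) + ["<eos>"]  # mark the end of each sequence
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(model, prompt, max_new_tokens=10):
    """Greedy autoregressive decoding: repeatedly append the most likely next token."""
    tokens = tokenise(prompt)
    for _ in range(max_new_tokens):
        candidates = model.get(tokens[-1])  # .get does not create new entries
        if not candidates:
            break  # unseen token: the stand-in has nothing to predict
        next_token = candidates.most_common(1)[0][0]
        if next_token == "<eos>":
            break
        tokens.append(next_token)
    return " ".join(tokens)


corpus = [
    "the model predicts the next token",
    "the model reads the prompt",
    "attention helps the model",
]
model = train_bigram_model(corpus)
print(generate(model, "attention helps"))
```

Because this stand-in sees only the previous token, the example quickly falls into a repetitive loop ("the model predicts the model predicts ..."); a transformer instead conditions each prediction on the entire preceding context through self-attention.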

Computer Vision

Computer vision enables machines to interpret and make decisions based on visual data: images, video, and 3D point clouds. The fundamental tasks include image classification, object detection, semantic segmentation, and depth estimation.

Research from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and other labs has helped advance vision transformers (ViTs), first introduced by Dosovitskiy et al. at Google Research in 2020, which apply the same self-attention mechanism used in NLP to patches of an image. This cross-pollination between NLP and computer vision is one of the most exciting trends in current AI research. It underscores why a strong grasp of AI fundamentals, particularly linear algebra and attention mechanisms, is so valuable.
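The patch step that lets a ViT reuse NLP-style attention is simple: the image is cut into fixed-size patches, and each patch is flattened into a vector that plays the role of a token. A minimal sketch, assuming a single-channel (greyscale) image stored as nested lists; in the ViT design each flattened patch is then linearly projected and combined with a position embedding, which is omitted here.

```python
def image_to_patches(image, patch_size):
    """Split an H x W greyscale image into non-overlapping patch_size x patch_size
    patches, each flattened row-major into a vector (one "token" per patch)."""
    h, w = len(image), len(image[0])
    if h % patch_size or w % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    # Walk the patch grid left to right, top to bottom
    for top in range(0, h, patch_size):
        for left in range(0, w, patch_size):
            patch = [image[top + i][left + j]
                     for i in range(patch_size) for j in range(patch_size)]
            patches.append(patch)
    return patches


# Toy 4x4 image whose pixel values are their row-major index (0..15)
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patches = image_to_patches(image, patch_size=2)
print(len(patches))  # → 4
print(patches[0])    # → [0, 1, 4, 5]
```

From this point on, the sequence of patch vectors can be fed to the same kind of self-attention used for text.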

Robotics and AI Agents

Robotics brings AI into the physical world. A robotic AI system must perceive its environment (computer vision, LiDAR), make decisions (planning, reinforcement learning), and act (motor control). The perception-action loop is the fundamental abstraction.
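The loop can be sketched with tabular Q-learning, one standard reinforcement learning method, in a hypothetical one-dimensional corridor: the robot perceives its cell, decides on a move using its learned action values, acts, and updates those values from the reward. The world, reward, and hyperparameters are illustrative assumptions; a real robot would perceive through noisy sensors such as cameras or LiDAR and act through motor controllers.

```python
import random

# Hypothetical toy world: a corridor of cells 0..4 with the goal at cell 4
N_CELLS, GOAL = 5, 4
ACTIONS = (-1, +1)  # move left, move right


def perceive(position):
    # Perception: the robot observes its cell index directly (no sensor noise)
    return position


def act(position, action):
    # Action: move, clamped to the corridor; reward 1.0 only on reaching the goal
    new_pos = min(max(position + action, 0), N_CELLS - 1)
    return new_pos, (1.0 if new_pos == GOAL else 0.0)


def choose(q, state, epsilon, rng):
    # Decision: epsilon-greedy over learned action values, breaking ties randomly
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}
    for _ in range(episodes):
        position = 0
        while position != GOAL:  # one perception-action loop per step
            state = perceive(position)
            action = choose(q, state, epsilon, rng)
            position, reward = act(position, action)
            next_state = perceive(position)
            # Q-learning update; the goal is terminal, so it adds no future value
            future = 0.0 if position == GOAL else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
    return q


q = train()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]
print(policy)  # learned action in cells 0..3
```

After training, the greedy policy should move right (+1) in every cell before the goal, learned purely from the reward signal rather than from a hand-written route.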

The Oxford Robotics Institute (ORI) is one of the world’s leading research centres in this space, contributing breakthroughs in autonomous navigation, manipulation, and multi-agent coordination. Their work demonstrates how the theoretical AI fundamentals Oxford teaches in lecture halls directly feed into cutting-edge research on real robots operating in unstructured environments.

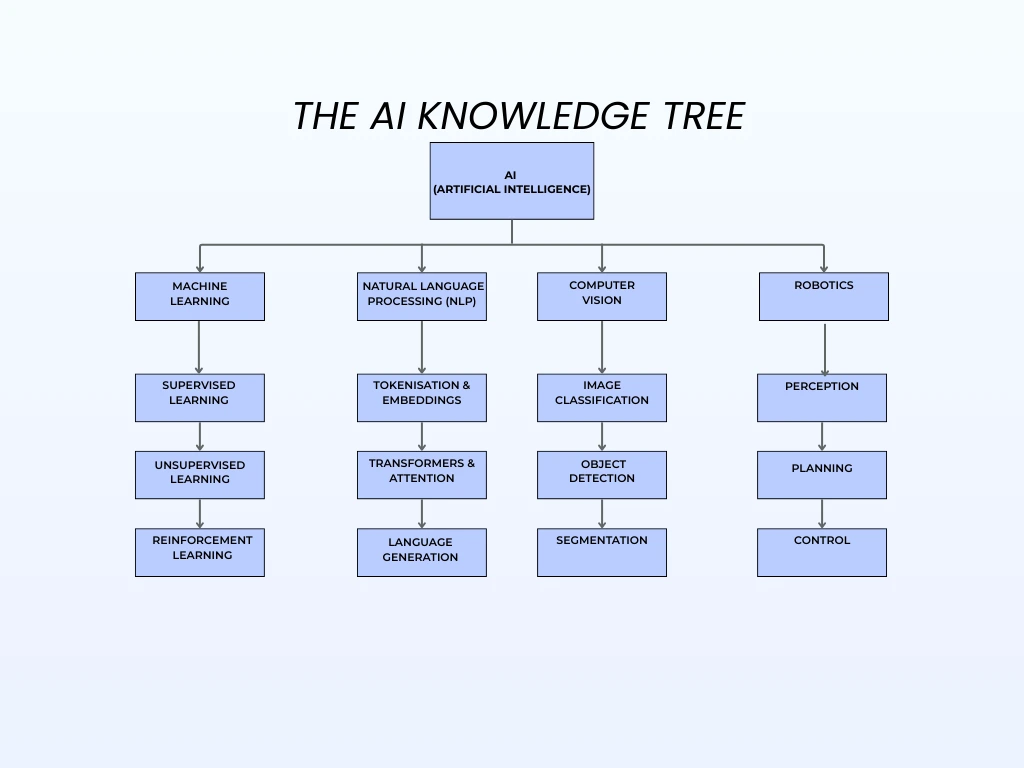

The AI Knowledge Tree: How Every Subfield Connects

One of the most important realisations when studying AI fundamentals is that every subfield is interconnected. The core branches form a knowledge tree, and that tree is not just a visual aid; it reflects the actual dependency structure of the field.

You cannot understand transformers without understanding embeddings. You cannot understand reinforcement learning without understanding optimisation. And you cannot build a robotic AI system without understanding both computer vision and planning. This is precisely why Oxford structures its AI curriculum to build from the mathematical base upward.
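Because the field has this dependency structure, a study order can be derived mechanically with a topological sort. In the sketch below, only the edges transformers → embeddings, reinforcement learning → optimisation, and robotics → computer vision and planning come from the text; the remaining prerequisites are illustrative assumptions. It uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical prerequisite map: each topic maps to the topics needed first.
prerequisites = {
    "linear algebra": set(),
    "probability": set(),
    "optimisation": {"linear algebra"},
    "embeddings": {"linear algebra"},
    "attention": {"embeddings"},
    "transformers": {"embeddings", "attention"},
    "reinforcement learning": {"optimisation", "probability"},
    "computer vision": {"linear algebra", "optimisation"},
    "planning": {"probability"},
    "robotics": {"computer vision", "planning"},
}

# static_order() yields every topic only after all of its prerequisites
# (and raises graphlib.CycleError if the map contains a cycle)
study_order = list(TopologicalSorter(prerequisites).static_order())
print(study_order)
```

Several valid orders usually exist; any of them respects the rule that foundations come before the topics built on them.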

Related Questions Users Ask

What Programming Languages Do I Need to Learn AI Fundamentals?

Python is the undisputed primary language for AI. Its ecosystem (NumPy, Pandas, Scikit-learn, PyTorch, TensorFlow, and Hugging Face Transformers) covers every stage from data preprocessing to model deployment. For students approaching AI fundamentals Oxford-style, Python is the starting point.

Beyond Python, R remains useful for statistical analysis and data visualisation, and Julia is gaining traction in high-performance scientific computing and AI research. However, for the vast majority of learners, deep proficiency in Python alone will carry you through every fundamental concept and well into advanced applications.

How Long Does It Take to Learn AI Fundamentals?

This depends heavily on your starting point:

| Background | Estimated Time to Solid Fundamentals |
|---|---|
| CS / Mathematics degree holder | 3-6 months |
| Working developer (no ML experience) | 6-9 months |
| Career switcher (non-technical background) | 12-18 months |

Oxford’s own AI programmes, such as the Oxford Artificial Intelligence Programme offered through Saïd Business School, typically run for 6 to 12 weeks of intensive study. However, these programmes assume some prior quantitative literacy. Self-directed learners can follow a similar timeline using open resources like MIT OpenCourseWare, fast.ai, and Andrew Ng’s Coursera specialisations.

What’s the Difference Between AI, Machine Learning, and Deep Learning?

This is one of the most frequently confused distinctions, and getting it right is essential to understanding AI fundamentals.

| Term | Definition | Relationship |
|---|---|---|
| Artificial Intelligence | The broadest field: any system that exhibits intelligent behaviour | Parent category |
| Machine Learning | A subset of AI: systems that learn from data | Subset of AI |
| Deep Learning | A subset of ML that uses multi-layer neural networks | Subset of ML |

As Russell and Norvig explain in Artificial Intelligence: A Modern Approach, the standard textbook used across Oxford, Stanford, and MIT, AI encompasses everything from simple rule-based expert systems to complex neural networks. Machine learning is the most successful modern approach to achieving AI, and deep learning is the most successful modern approach to machine learning.
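The line between classical AI and machine learning shows up in a toy example: the same yes/no decision made once by a hand-written rule, in the spirit of an expert system, and once by a decision stump that learns a threshold from labelled examples. The spam-filter scenario, the feature (exclamation-mark count), and the data are hypothetical.

```python
def rule_based_is_spam(message):
    # Classic rule-based AI: a human writes the decision rule explicitly
    return "free money" in message.lower()


def learn_threshold(scores, labels):
    """Machine learning in miniature (a decision stump): pick the threshold on one
    numeric feature that classifies the most labelled training examples correctly."""
    best_threshold, best_correct = None, -1
    for t in sorted(set(scores)):
        # Predict True when the feature is at or above the candidate threshold
        correct = sum((s >= t) == label for s, label in zip(scores, labels))
        if correct > best_correct:
            best_threshold, best_correct = t, correct
    return best_threshold


print(rule_based_is_spam("Claim your FREE MONEY now"))  # → True

# Training data: exclamation-mark counts per message, with human-provided labels
exclamations = [0, 1, 1, 3, 4, 5]
is_spam = [False, False, False, True, True, True]
print(learn_threshold(exclamations, is_spam))  # → 3
```

Both functions are AI in the broad sense; only the second derives its behaviour from data, which is what places it inside machine learning. Replacing the single threshold with a multi-layer neural network would move it into deep learning.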

Is Oxford’s AI Programme Worth It Compared to Free Resources?

This depends on your goals, budget, and learning style.

| Factor | Oxford Programme | Free Resources (fast.ai, MIT OCW, Coursera) |
|---|---|---|
| Credential value | High (Oxford brand, certificate) | Low to moderate |
| Structure & accountability | High (cohort-based, deadlines) | Self-directed |
| Depth of theory | Very high | Varies widely |
| Networking | Strong (peers, alumni) | Limited |
| Cost | £2,000-£15,000+ | Free or under £50/month |

For professionals seeking career advancement or a credential that carries weight, the AI fundamentals Oxford offers through its formal programmes provide clear value. For self-motivated developers who learn well independently, free resources can deliver equivalent technical knowledge at a fraction of the cost.

What Career Paths Open Up After Learning AI Fundamentals?

Mastering AI fundamentals opens doors to some of the most in-demand and well-compensated roles in the global economy. According to Stanford’s 2025 AI Index Report, demand for AI-skilled professionals has grown year-over-year in every major economy since 2018.

Key career paths include:

The AI Fundamentals Learning Roadmap

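This roadmap is designed for students, professionals, and developers who want a structured path from zero to competence in AI fundamentals. It reflects the progression that Oxford and other leading institutions recommend: begin with Python and core mathematics, move into classical machine learning, advance to deep learning, and then specialise while building research literacy.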

Expert Insights: What Leading AI Researchers Say

The importance of strong fundamentals is a recurring theme among the world’s most respected AI researchers. Professor Michael Wooldridge, former head of Oxford’s Department of Computer Science, has consistently argued that the rush to apply AI without understanding its foundations leads to brittle systems and flawed decision-making. In A Brief History of Artificial Intelligence, he writes that genuine progress in AI has always come from researchers who understood the mathematical and philosophical foundations deeply, not from surface-level tool users.

Professor Yoshua Bengio, Turing Award laureate and founder of Mila (the Quebec AI Institute), has emphasised that deep learning’s power comes precisely from its mathematical elegance, and that students who skip the fundamentals inevitably hit a ceiling. His co-authored textbook Deep Learning (with Goodfellow and Courville) remains one of the definitive references for anyone studying AI fundamentals at an Oxford-equivalent level.

Research from MIT CSAIL has reinforced these views with data. Their studies on AI education effectiveness show that students who spend more time on mathematical foundations before touching code perform significantly better in advanced coursework and produce more robust models in practice.

These perspectives converge on a single point: whether you are studying AI fundamentals Oxford-style or through self-directed learning, the depth of your foundational understanding determines the height of your eventual expertise.

Key Takeaways

Mastering AI fundamentals is essential for anyone who wants to work meaningfully in technology, research, or data-driven business in 2026 and beyond. The core pillars (machine learning, deep learning, natural language processing, computer vision, and robotics) form an interconnected system in which understanding one area strengthens the others. The rigorous approach taught at leading institutions such as the University of Oxford emphasises strong mathematical foundations and theoretical depth, but the same knowledge can be built through open-source courses and classic texts like Artificial Intelligence: A Modern Approach by Stuart Russell and Peter Norvig.

These fundamentals power real-world systems used by billions, from transformer-based models like ChatGPT that rely on tokenisation, embeddings, and self-attention, to autonomous systems such as Tesla Autopilot that combine convolutional neural networks and reinforcement learning for real-time perception and decision-making.

For students and professionals, the roadmap is clear: begin with Python and core mathematics, move into classical machine learning, advance toward deep learning, and then specialise while building research literacy through platforms like arXiv and research from institutions such as Stanford University and the Massachusetts Institute of Technology. Rather than chasing every new tool or framework, professionals should focus on strengthening the fundamentals most relevant to their roles. The real competitive advantage in AI comes from understanding why algorithms work, not just how to implement them, because the foundations taught in rigorous programmes remain the backbone of every modern AI system.

FAQs

Is the Oxford course worth it?

Yes, the University of Oxford’s programme can be worth it if you want a structured understanding of AI fundamentals and of how AI applies in real-world industries. It is especially useful for professionals, managers, and decision-makers who want strategic knowledge rather than deep technical coding skills. However, developers may still need additional training in programming, mathematics, and machine learning beyond what the Oxford course covers.

Which is better, MIT or Oxford?

Both are world-class, but the better choice depends on your goal. The Massachusetts Institute of Technology is often ranked #1 globally for AI and data science, making it slightly stronger for cutting-edge AI research and engineering. The University of Oxford is equally prestigious, sometimes ranks #1 for computer science overall, and is known for strong theoretical foundations and research depth. For learning AI fundamentals, Oxford is excellent academically, but MIT is usually considered stronger for practical AI innovation and industry impact.

Is the Oxford AI course free?

No, the official Oxford Artificial Intelligence Programme is not free: it is a 6-week online course that costs about £2,450. Free beginner AI courses are available online, but they are not the same as the official Oxford executive programme.